AI in the age of ChatGPT

A synopsis of the process of building AI products using Large Language Models, their potential value, current limitations and some advice on ensuring they work for your use-case.

It’s not exactly news that a tectonic shift is happening right now in the field of AI. Much of it can be credited to the emergence of Large Language Models (LLMs), and specifically to the introduction of OpenAI’s ChatGPT, which has been reported as the fastest-growing consumer application in history. AI has undeniably become a prominent part of technology and society, if it wasn’t already.

The excitement among researchers, entrepreneurs, investors and the public at large is palpable. Even the post-COVID downturn in tech hasn’t affected the field as much as other sectors. Investments in new AI startups and research labs are at an all-time high.

The hype surrounding AI has, however, made it increasingly challenging to separate the signal from the noise. A cottage industry has emerged of articles, videos, courses and AI-related services that claim to teach you how to integrate ChatGPT-like features into your applications, or even how to build the next ChatGPT. Inevitably, there is a lot of AI “snake oil” out there.

In this context, judging the authenticity of information has become essential, given its sheer volume and its potential impact on how the field grows.

Developing AI-enabled Products

Given this backdrop, companies that want to make use of AI in their product line face a daunting task. Although the possibilities of LLMs seem endless at first, once the initial novelty wears off, the real complexity of putting them into production sets in.

Every team starting out on this journey will inevitably face a few universal questions, and you will need to answer most of them to make LLMs truly usable in your product.

- Do we use ChatGPT, or are other vendors a better fit?

- Do we explore Llama2 and other open-source LLMs instead?

- Will the models be able to learn from my specific data to fit my use-cases?

- How do I ensure my proprietary data is protected when I send it over an API?

- Is using an LLM really going to enhance my product experience?

- How do I ensure the results from the models are fact-based and not hallucinated?

Customizing or fine-tuning the model to your specific domain and dataset is another major aspect of building with LLMs. This comes with its own set of nuances and requires a clear understanding of your own domain, as well as of whether the model you choose allows fine-tuning at all.
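To make this concrete, fine-tuning usually starts with turning your domain data into training examples in the format the provider or framework expects. The minimal sketch below assumes OpenAI's chat fine-tuning JSONL format, where each line holds a `messages` list of system, user and assistant turns; the example pairs, `SYSTEM_PROMPT` and the helper names are hypothetical, and other vendors and open-source frameworks use different formats.

```python
import json

# Hypothetical domain examples: (question, expected answer) pairs from your own data.
examples = [
    ("What is our refund window?", "Refunds are accepted within 30 days of purchase."),
    ("Do you ship internationally?", "Yes, we ship to most countries."),
]

SYSTEM_PROMPT = "You are a support assistant for ExampleCo."  # hypothetical


def to_chat_record(question: str, answer: str) -> dict:
    """Wrap one Q&A pair in a chat-style 'messages' structure
    (system instruction, user question, desired assistant answer)."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": question},
            {"role": "assistant", "content": answer},
        ]
    }


def to_jsonl(pairs) -> str:
    # JSONL: one complete JSON object per line.
    return "\n".join(json.dumps(to_chat_record(q, a)) for q, a in pairs)


jsonl = to_jsonl(examples)
print(jsonl.splitlines()[0])
```

The quality and coverage of these examples matter far more than the conversion code, which is why understanding your own domain comes first.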

In this article, I will distill the LLM / AI paradigm and provide a more practical blueprint for implementing it in your own products.

Software 2.0

LLMs have proven to have real-world applications across a variety of industries. One notable example is GitHub Copilot, which utilizes LLM technology to assist developers in writing code. These advancements are not just incremental improvements but represent an entirely new class of software that was previously impossible or only achievable through large investments. Some have even called this Software 2.0.

In many ways, the current phase of AI can be likened to the dot-com boom of the late 1990s. Back then the Internet hype was at its peak. The potential was real. But since it was so early, a large number of startups came and went, with only a handful of them surviving the dot-com crash of the early 2000s.

Like that period, the industry is still in the early stages of understanding best practices and exploring profitable use-cases. A major obstacle for companies is how different building AI products is from traditional software development.

A new way of building

The first major difference between traditional software and AI systems is their non-deterministic nature. Software systems (if written well) are considered deterministic. This means that, given a particular state of the system, it will give the same output for the same input. Most software testing is based on this premise.

However, AI systems are probabilistic, meaning they may not always produce the same output for identical inputs. LLMs, which belong to the “generative AI” family of models, are no different. For example, ChatGPT can give different answers to the same question at different times.
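To see where this variability comes from, it helps to look at how an LLM picks each next token. The sketch below is a simplified, self-contained model of that step, not any vendor's implementation: the toy `tokens` and `logits` are made up, and `temperature` mirrors the setting many LLM APIs expose. With sampling, identical inputs can give different tokens; greedy decoding (temperature 0 here) always picks the top-scoring one.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def next_token(tokens, logits, temperature, rng):
    if temperature == 0:
        # Greedy decoding: always pick the highest-scoring token, so the output is repeatable.
        return tokens[logits.index(max(logits))]
    probs = softmax_with_temperature(logits, temperature)
    # Sampling: the same input can yield a different token on each call.
    return rng.choices(tokens, weights=probs, k=1)[0]


# Toy vocabulary and scores for a single decoding step (illustrative numbers only).
tokens = ["Paris", "Lyon", "France"]
logits = [3.0, 1.5, 1.0]
rng = random.Random()

print({next_token(tokens, logits, 1.0, rng) for _ in range(50)})  # almost always several tokens
print({next_token(tokens, logits, 0, rng) for _ in range(50)})    # {'Paris'}
```

This is also why tests for LLM features tend to check properties of an output (its format, or whether key facts appear) rather than exact strings. Note that on hosted APIs even a temperature of 0 may not guarantee perfectly identical outputs.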

Another difference is the need for specialized hardware, such as GPUs, to run AI models. There may also be a need to re-train models with fresh data as their performance degrades over time, for instance when the data they see in production drifts away from the data they were trained on. This runs counter to conventional thinking, where the more software is used, the more valuable it becomes. Hence, AI systems come with unique learning curves beyond those usually present in traditional software systems.
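One common way to notice that a model needs re-training is to monitor a quality metric on recent, labelled production data and compare it with the level measured at deployment. The sketch below shows that idea with a sliding-window accuracy check; the class name, the window size and the 5-point tolerance are hypothetical choices, and real setups often also watch for shifts in the input data itself.

```python
from collections import deque


class AccuracyMonitor:
    """Track accuracy over a sliding window of recent labelled predictions and
    flag when it falls too far below the accuracy measured at deployment."""

    def __init__(self, baseline_accuracy: float, window: int = 100, tolerance: float = 0.05):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self.results = deque(maxlen=window)  # oldest results drop off automatically

    def record(self, prediction, actual) -> None:
        self.results.append(prediction == actual)

    def rolling_accuracy(self) -> float:
        return sum(self.results) / len(self.results) if self.results else self.baseline

    def needs_retraining(self) -> bool:
        # Only judge once the window is full, to avoid reacting to a handful of samples.
        if len(self.results) < self.results.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance


monitor = AccuracyMonitor(baseline_accuracy=0.90, window=10)
for pred, actual in [("a", "a")] * 7 + [("a", "b")] * 3:  # 70% correct in this window
    monitor.record(pred, actual)
print(monitor.rolling_accuracy(), monitor.needs_retraining())  # 0.7 True
```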

This new way of building software systems can catch people new to the field off-guard. Thus, as the industry continues to evolve, it is crucial to explore and establish best practices for harnessing the potential of LLMs while addressing the challenges they present.

Today’s internet behemoths, such as Google, Facebook and Salesforce, emerged from the dot-com era and its aftermath. In the same way, the companies that figure out this new way of building could become the behemoths of the AI age.

ChatGPT and Friends

Since OpenAI released ChatGPT for general use in late 2022, it has effectively become the market leader in the LLM-based AI domain. Competitors and research labs like Anthropic, Adept and Cohere have also released similar proprietary LLM APIs, as have big tech companies like Microsoft and Google.

The increasing competition in the AI/LLM space has significantly brought down the prices these vendors charge, making these powerful models available to individuals and smaller companies.

Given the low barrier to entry, these proprietary APIs provide the best way to test the waters to see if LLMs bring any value to your company and your product features. But there are a few things to keep in mind before betting your whole product or company strategy around these APIs.

Thin wrappers

Most of the examples you will see in AI are essentially “thin wrappers” around the ChatGPT (or similar) API. What this means is that the product relies mostly on giving the model a “prompt” that is relevant to the context. The resulting user experience is based on a slightly post-processed version of the LLM output.
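In code, such a thin wrapper can be remarkably small. The sketch below is a hypothetical example: `PROMPT_TEMPLATE`, `summarize_review` and the `call_model` parameter are illustrative names, and `fake_model` is a stub standing in for a real hosted API client so the example runs offline.

```python
from typing import Callable

# A "thin wrapper" in miniature: a prompt template, one model call, light post-processing.
PROMPT_TEMPLATE = (
    "Summarize the following customer review in one sentence, "
    "then label its sentiment as POSITIVE or NEGATIVE.\n\nReview: {review}"
)


def summarize_review(review: str, call_model: Callable[[str], str]) -> dict:
    prompt = PROMPT_TEMPLATE.format(review=review)
    raw = call_model(prompt)
    # Post-processing: split the model's free text into fields the UI can show.
    lines = [line.strip() for line in raw.strip().splitlines() if line.strip()]
    sentiment = "POSITIVE" if "POSITIVE" in lines[-1].upper() else "NEGATIVE"
    return {"summary": lines[0], "sentiment": sentiment}


# Stub model (hypothetical) so the sketch runs without network access.
def fake_model(prompt: str) -> str:
    return "The customer loved the fast delivery.\nSentiment: POSITIVE"


print(summarize_review("Arrived in two days, great!", fake_model))
```

Almost all of the product logic lives in the prompt and a few lines of parsing, which is exactly what makes such a product easy to replicate, or to be made redundant by the provider.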

Relying solely on these APIs comes with risks, such as vulnerability to changes made by the service providers themselves. This was evident at the recently concluded OpenAI DevDay event, where OpenAI introduced new features that make it easy for users to upload their own data to its API and start asking questions about it, overlapping directly with what many thin-wrapper products offer.

Thus, it is advisable that your product not rely on any particular model, but rather use it as part of a larger system that you control.
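One way to keep that control is to have your product depend on a small interface you own, with thin adapters for each model behind it. In the minimal sketch below, `TextModel`, `HostedModel`, `LocalModel` and `Router` are hypothetical names, and the adapters return canned strings instead of calling a real SDK; the point is only the structure, where swapping or adding a provider does not touch the rest of the product.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only interface the rest of the product depends on."""

    def complete(self, prompt: str) -> str: ...


class HostedModel:
    """Hypothetical adapter around a proprietary API client (not a real SDK)."""

    def __init__(self, available: bool = True):
        self.available = available

    def complete(self, prompt: str) -> str:
        if not self.available:
            raise ConnectionError("hosted API unavailable")
        return f"[hosted] {prompt}"


class LocalModel:
    """Hypothetical adapter around a self-hosted open-weight model."""

    def complete(self, prompt: str) -> str:
        return f"[local] {prompt}"


class Router:
    """Try models in order of preference, falling back to the next one on failure."""

    def __init__(self, *models: TextModel):
        self.models = models

    def complete(self, prompt: str) -> str:
        last_error = None
        for model in self.models:
            try:
                return model.complete(prompt)
            except ConnectionError as err:
                last_error = err
        raise RuntimeError("all models failed") from last_error


router = Router(HostedModel(available=False), LocalModel())
print(router.complete("Hello"))  # [local] Hello
```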

Data privacy concerns

Data privacy has become a huge concern in the ChatGPT era, since using LLMs relies on users sending data in the form of prompts or fine-tuning jobs. This is an especially acute concern for enterprises, which generally have strict data-sharing policies and compliance requirements.

To remain compliant, you should make provisions to ensure any private user data or your company’s proprietary data does not leak into the APIs.
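A basic provision is to redact obvious personal data before a prompt leaves your systems. The sketch below uses two deliberately simple regular expressions (e-mail addresses and phone-number-like digit runs) and keeps the placeholder mapping on your side; the patterns and names are illustrative assumptions, and real compliance work needs much more thorough detection than this.

```python
import re

# Deliberately simple patterns for illustration; real PII detection needs far more
# (names, addresses, IDs, locale-specific formats) and often a dedicated tool.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> tuple[str, dict[str, str]]:
    """Replace matches with placeholders. Return the redacted text plus a mapping
    that stays on your side, so responses can be re-personalized locally."""
    mapping: dict[str, str] = {}
    for label, pattern in PATTERNS.items():
        def substitute(match, label=label):
            placeholder = f"<{label}_{len(mapping)}>"
            mapping[placeholder] = match.group(0)
            return placeholder
        text = pattern.sub(substitute, text)
    return text, mapping


safe, mapping = redact("Contact jane.doe@example.com or +1 (555) 123-4567 about the invoice.")
print(safe)  # Contact <EMAIL_0> or <PHONE_1> about the invoice.
```

Only `safe` would be sent to the external API; `mapping` never leaves your infrastructure.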

Data security and privacy are very important and far-reaching topics, and I plan to publish an entire article on data privacy in AI in the future.

Time lag in API responses

Another often overlooked fact about LLM outputs is that they aren’t instant. These models can have billions of parameters, need to process the prior context of the chat or question, and generate their output one token at a time. This means there can be a noticeable delay between sending an API request and receiving the reply, and a delay like this may not be acceptable in your use case.
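If you cannot avoid the delay, you can at least bound how long a user waits. The sketch below assumes a hypothetical `slow_llm_call` that simulates a half-second request, and uses Python's `concurrent.futures` to return a fallback message when a latency budget is exceeded. Streaming partial output as it is generated, which many LLM APIs support, is another common way to make the wait feel shorter.

```python
import concurrent.futures
import time

# One shared worker pool. A call that times out keeps running in the background;
# we simply stop waiting for it.
_executor = concurrent.futures.ThreadPoolExecutor(max_workers=4)


def slow_llm_call(prompt: str) -> str:
    """Hypothetical stand-in for a remote LLM request that takes a while."""
    time.sleep(0.5)
    return f"answer to: {prompt}"


def answer_within(prompt: str, budget_s: float, fallback: str) -> str:
    """Return the model's answer if it arrives within the latency budget,
    otherwise return a fallback so the user is not left waiting."""
    future = _executor.submit(slow_llm_call, prompt)
    try:
        return future.result(timeout=budget_s)
    except concurrent.futures.TimeoutError:
        return fallback


print(answer_within("Summarize my order", 0.1, "Still working on it..."))  # Still working on it...
print(answer_within("Summarize my order", 2.0, "Still working on it..."))  # answer to: Summarize my order
```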

A Herd of Llamas

Using a proprietary LLM or pre-training a foundation model from scratch may not always be viable for you. In that case, working with an openly available pre-trained model is your best option.

The Llama family of models from Meta (formerly Facebook) is the most popular among these “open” models. Other popular open models include Mistral 7B from Mistral AI, Alpaca from Stanford and Vicuna 13B, among others.

One thing to remember is that even though these models are referred to as open-source in most places (including here), they are mostly “open-weight”. This means the model artifacts are made available with some documentation, but not necessarily the code used to train them.

Despite the real advantages of these models, like giving you full control over how you use and deploy them, they come with some considerations of their own.

High cost

Even though these models are pre-trained, they are normally trained on very general “internet” data and are thus not always usable for your use case out of the box. They often require fine-tuning on your custom data and domain, and fine-tuning LLMs still requires a lot of hardware and specialized know-how. For example, the best-performing of these models, like Llama2 70B, require significant disk space, memory and compute hardware like GPUs.
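A quick back-of-the-envelope calculation shows why. The weights alone take roughly the number of parameters times the bytes used per parameter. The sketch below applies this to a 70-billion-parameter model like Llama2 70B; it deliberately ignores activations, the attention key/value cache and framework overhead, so real requirements are higher.

```python
# Rough weight-only memory estimate: parameters x bytes per parameter.
# Ignores activations, the KV cache and framework overhead, which add more on top.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    return num_params * BYTES_PER_PARAM[precision] / 1e9  # decimal gigabytes


for precision in ("fp32", "fp16", "int8", "int4"):
    print(precision, weight_memory_gb(70e9, precision), "GB")
```

At 16-bit precision the weights alone come to about 140 GB, more than a single 80 GB GPU can hold, which is why such models are usually split across several GPUs or compressed.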

Thankfully, strides have been made to fit these models onto smaller machines through techniques like weight quantization, which stores each parameter with fewer bits (for example 8 or 4 instead of 16) without a large loss in performance, and specialized tools like llama.cpp. I believe the cost of fine-tuning and deploying LLMs will come down significantly in the near future.
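To illustrate the idea, the sketch below implements a simple symmetric int8 scheme: one scale factor maps each float weight to an integer in [-127, 127]. The number of weights stays the same; only the bits per weight shrink. The weights and function names are made up for illustration, and tools such as llama.cpp use more elaborate block-wise formats.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: map floats to integers in [-127, 127]
    using a single scale factor, so each weight needs 1 byte instead of 2 or 4."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    # Recover approximate float weights; the rounding error per weight is at most scale / 2.
    return [v * scale for v in q]


weights = [0.82, -1.27, 0.05, 0.4, -0.33]  # illustrative values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q)  # the same number of weights, each now a small integer
print(max(abs(a - b) for a, b in zip(weights, restored)) <= scale / 2)  # True
```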

Production woes

Another challenge you may quickly face is the lack of standard architectures and best practices for making the most of these models in production. Even when you have a well-performing model, the process of generating output from these models (inference) has its own unique set of challenges.

Deploying AI models into production entails managing not only code, but also the models and the data used to train them. Most teams aren’t yet familiar with the scaling and parallelization challenges unique to AI apps. Again, cloud providers have made huge strides in these areas, and it should get easier as the field converges on particular tools and practices.
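In practice, this means versioning the model and its training data alongside the code. The minimal sketch below records a model version, the code revision and a SHA-256 fingerprint of the training data in one manifest; the field names and values are hypothetical, and tools such as MLflow or DVC offer fuller versions of this idea.

```python
import hashlib
import json


def sha256_of(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def build_manifest(model_name: str, model_version: str, code_commit: str,
                   training_data: bytes, params: dict) -> dict:
    """Record what is needed to reproduce or roll back a deployed model:
    the model version, the code revision and a fingerprint of the training data."""
    return {
        "model": model_name,
        "version": model_version,
        "code_commit": code_commit,
        "training_data_sha256": sha256_of(training_data),
        "hyperparameters": params,
    }


# Hypothetical values for illustration.
data = b'{"prompt": "...", "completion": "..."}\n'
manifest = build_manifest("support-assistant", "1.3.0", "abc1234", data,
                          {"learning_rate": 2e-5, "epochs": 3})
print(json.dumps(manifest, indent=2))
```

If the training data changes by even one byte, the fingerprint changes, so a manifest like this makes it clear which data produced which deployed model.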

Geopolitical Undertones

Though not talked about as much, AI has also become a geopolitical issue. This is especially visible in the competition between the US and China for AI supremacy.

Without going much deeper into this, access to LLM APIs may be restricted in certain countries due to geopolitical factors, limiting their availability and usage.

Also, the use of these models can be subject to national regulations and compliance requirements. This is especially the case in domains like healthcare and finance.

Hallucinations

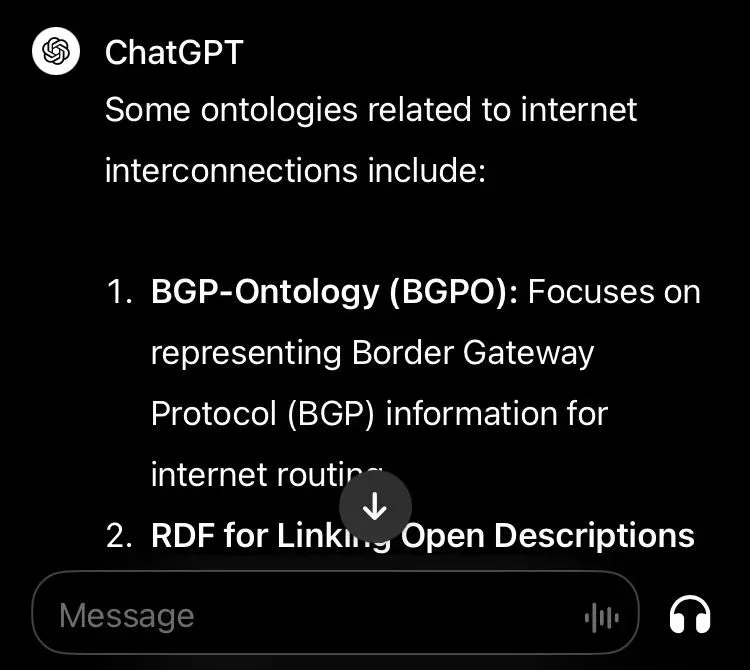

As powerful as LLMs are, they suffer from a fundamental limitation. As stated earlier, they are probabilistic in nature and optimized for tasks like next-word prediction and question answering. This can often lead them to produce outputs that are factually wrong, with no real grounding in reality.

In other words, an LLM can generate text that is made up, yet present it in a way that appears real and confident. This phenomenon is called “hallucination”. As we currently understand it, the lack of reasoning ability in LLMs is a major cause of this. Users need to be aware of this limitation when using these models.

New methods like Retrieval Augmented Generation (RAG), which supplies the model with relevant passages from your own data at query time, and other ways to reference the exact data an output is generated from are being developed to mitigate this. The problem is far from solved, though, and solving it will be vital for the large-scale adoption of LLMs.
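The core of RAG is simple to sketch: retrieve the passages most relevant to a question from your own data and place them in the prompt, asking the model to answer only from them and cite its source. In the dependency-free sketch below, the documents are hypothetical and retrieval is plain word overlap, a stand-in for the embedding-based vector search most real RAG systems use; the resulting prompt would then be sent to whichever model you use.

```python
import re

# Toy knowledge base (hypothetical); in practice these would be chunks of your own documents.
DOCUMENTS = {
    "refunds.md": "Refunds are accepted within 30 days of purchase with a receipt.",
    "shipping.md": "Orders ship within 2 business days. International shipping takes 7-14 days.",
    "warranty.md": "All devices carry a one-year limited warranty.",
}


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, k: int = 2) -> list[tuple[str, str]]:
    """Rank documents by word overlap with the question. A real system would use
    embeddings and a vector index; word overlap keeps the sketch dependency-free."""
    q = tokenize(question)
    ranked = sorted(DOCUMENTS.items(), key=lambda item: len(q & tokenize(item[1])), reverse=True)
    return ranked[:k]


def build_grounded_prompt(question: str) -> str:
    # Put retrieved passages in the prompt and ask the model to cite them,
    # so answers can be checked against the exact source text.
    context = "\n".join(f"[{name}] {text}" for name, text in retrieve(question))
    return (
        "Answer using ONLY the sources below and cite the source name. "
        "If the answer is not in the sources, say you don't know.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


print(build_grounded_prompt("How many days do I have to request refunds?"))
```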

When NOT LLMs?

Throughout this article, we have looked into the challenges of using LLMs. But it is also important to know when LLMs are not suitable for your application. LLMs are “generative” models that can be customized for tasks like chat and question answering, and not all products fit this mold. A large number of use-cases can still be solved with descriptive, predictive, time-series or even rule-based models.

Depending on your needs, you should consider traditional predictive AI models or even hand-coded rules. The time and cost of developing an LLM feature that adds no value are better spent on building actual value for your customers than on following mere gimmicks.
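As an illustration of how far hand-coded rules can go, here is a hypothetical support-ticket router. The queue names and keywords are made up; the point is that when categories are few and well defined, a few lines of rules can be cheaper, faster and easier to audit than an LLM.

```python
# Hypothetical support-ticket router built from hand-written keyword rules.
RULES = [
    ("billing", ("invoice", "refund", "charge", "payment")),
    ("shipping", ("delivery", "tracking", "shipped", "package")),
    ("account", ("password", "login", "sign in", "username")),
]


def route_ticket(text: str) -> str:
    lowered = text.lower()
    for queue, keywords in RULES:
        if any(keyword in lowered for keyword in keywords):
            return queue  # first matching rule wins, so list order encodes priority
    return "general"  # fallback queue for anything the rules don't cover


print(route_ticket("I was charged twice on my last invoice"))  # billing
print(route_ticket("Where is my package?"))                     # shipping
print(route_ticket("Do you have a store in Berlin?"))           # general
```

Every decision here can be traced to a specific rule, which is often exactly what a support or compliance team wants.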

Conclusion

So, to bring this home, we looked at how new LLM apps are being built, the value they bring and the challenges they face.

The main points of this article can be summarized as follows:

- It is very early days in the LLM age. The true value of these models and best practices on using them are still being discovered.

- LLMs are not just another way to add AI to your products; they represent a whole new way of creating software products.

- The market is led by proprietary LLM API companies like OpenAI, but “open-weight” models led by Llama2 from Meta are quickly catching up.

- Both of these options have their own challenges. Which one is most viable depends on the needs and restrictions of your own use-case.

- Generally, it’s a lot easier and more cost-effective to start with a proprietary API, confirm that using LLMs benefits your product, and then choose either of the two options.

- Just because they are available doesn’t mean LLMs will fit your requirements. Use traditional AI models or hand-coded logic if that is more viable.

In future posts, I will delve into these topics with in-depth technical deep dives, AI product development strategies, and much more.